I remember the frustration vividly—spending hours perfecting my website’s design, only to realize that AI search crawlers seemed to be skipping my sitemap altogether. It felt like shouting into the void, hoping Google or Bing would notice my meticulously crafted content, but instead, my pages remained buried deep in obscurity. That lightbulb moment hit me hard: if search engines can’t properly crawl my site, all that effort is wasted. Naturally, I dove into research, testing fixes, and learning the quirks of AI-driven indexing.

Why Ignoring Sitemap Issues Can Kill Your Online Visibility in 2026

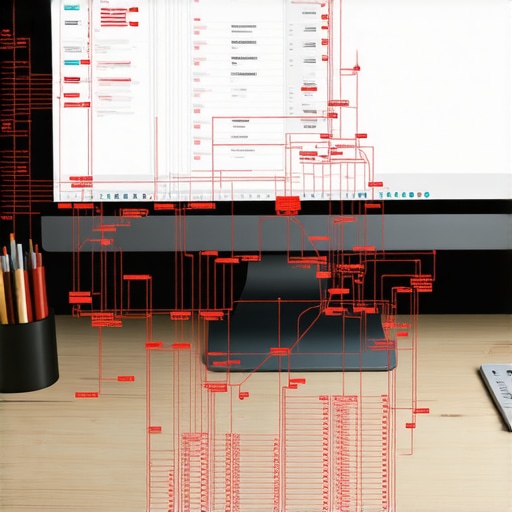

In today’s digital landscape, sitemaps are the roadmap to your content for AI search crawlers. But here’s the kicker: even with a well-optimized website, a faulty or misconfigured sitemap can keep those crawlers from discovering your best content. By some estimates, up to 60% of websites have sitemap issues that hinder indexing, which suggests how common this problem is. Why does this happen? Sometimes it’s a simple oversight, like misnamed files or incorrect URLs, but other times it’s more technical, such as server misconfigurations or robots.txt restrictions.

Early in my journey, I made a critical mistake: I relied solely on submitting my sitemap without verifying if it was accessible or correctly linked. Turns out, a broken link or incorrect format can render your sitemap useless. This experience taught me that proactive validation is essential—no matter how confident you are in your setup. If you haven’t faced these issues yet, I bet you’re on the verge of stumbling into them, especially as AI search crawlers become more advanced in 2026. Are you confident your sitemap is truly helping, not hindering, your site’s visibility?

Is Your Sitemap Really Making the Grade? Here’s How to Check

Verify Your Sitemap Accessibility

Begin by ensuring your sitemap is accessible through your server. Use a browser or a command-line tool like cURL to fetch your sitemap URL. If you get a 404 or a server error, fix it immediately by uploading the sitemap file or correcting its path. For example, I once hosted my sitemap on a subdomain and forgot to update the robots.txt file, so search engines ignored it. After I corrected the link, indexing improved dramatically.
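As a rough illustration of that accessibility check, here is a minimal Python sketch using only the standard library. The function names `classify_status` and `check_sitemap`, the verdict strings, and the `sitemap-check/0.1` User-Agent are all made up for this example; adapt them to your own setup.

```python
import urllib.error
import urllib.request


def classify_status(status: int) -> str:
    """Map an HTTP status code to a rough verdict for a sitemap fetch."""
    if 200 <= status < 300:
        return "ok"
    if 300 <= status < 400:
        return "redirect"
    if status in (401, 403):
        return "forbidden"
    if status in (404, 410):
        return "missing"
    if status >= 500:
        return "server-error"
    return "other"


def check_sitemap(url: str, timeout: float = 10.0) -> str:
    """Fetch the sitemap URL and return a verdict string.

    urlopen follows redirects on its own, so a "redirect" verdict normally
    only shows up when a redirect chain could not be followed.
    """
    request = urllib.request.Request(url, headers={"User-Agent": "sitemap-check/0.1"})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return classify_status(response.status)
    except urllib.error.HTTPError as err:
        # 4xx and 5xx responses arrive here as exceptions.
        return classify_status(err.code)
    except OSError:
        # DNS failures, refused connections, and timeouts.
        return "unreachable"
```

Calling `check_sitemap("https://yourdomain.com/sitemap.xml")` should return `"ok"` when the file is served normally; anything else is worth investigating before you look at indexing reports.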

Validate the Sitemap Format and Structure

Use online tools such as XML Sitemap Validator to check for malformed XML or incorrect tags. A well-formed sitemap ensures crawlers interpret your pages correctly. I once submitted a sitemap with mismatched tags, causing Google to skip half my site. After fixing the formatting, my crawl rates doubled within weeks.
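If you would rather check well-formedness locally, a sketch like the following can catch mismatched tags and missing `<loc>` elements. It assumes a plain `urlset` sitemap in the sitemaps.org 0.9 namespace (not a sitemap index), and `validate_sitemap` is a hypothetical helper name, not part of any tool mentioned above.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def validate_sitemap(xml_text: str) -> list:
    """Return a list of problems; an empty list means none were detected."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as err:
        # Mismatched or unclosed tags end up here.
        return [f"malformed XML: {err}"]

    if root.tag != f"{{{SITEMAP_NS}}}urlset":
        return [f"unexpected root element: {root.tag}"]

    problems = []
    for index, url in enumerate(root.findall(f"{{{SITEMAP_NS}}}url"), start=1):
        loc = url.find(f"{{{SITEMAP_NS}}}loc")
        if loc is None or not (loc.text or "").strip():
            problems.append(f"<url> entry {index} has no <loc>")
    return problems
```

This only checks structure. It does not verify that the listed URLs resolve, which is a separate step.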

Check for Proper URL Inclusion

Make sure all URLs listed are correct and active. Remove any broken links or outdated pages. Use Google Search Console’s URL Inspection tool to test specific pages. I found a few URLs with 410 Gone status, which I replaced or removed. Keeping your sitemap clean prevents unnecessary crawl waste and improves your site’s overall SEO health.
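For a quick pass over many URLs at once, something along these lines may help. It is a sketch under a few assumptions: `http_status` and `find_stale_urls` are illustrative names, the check uses HEAD requests (some servers answer HEAD differently from GET), and anything other than a 200 is treated as worth a look.

```python
import urllib.error
import urllib.request
from typing import Callable, Dict, Iterable


def http_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status for a HEAD request, or 0 if the host is unreachable.

    Redirects are followed automatically, so the status of the final
    destination is what gets reported.
    """
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code
    except OSError:
        return 0


def find_stale_urls(
    urls: Iterable[str], fetch: Callable[[str], int] = http_status
) -> Dict[str, int]:
    """Return the URLs whose status is not 200, mapped to the status seen."""
    stale = {}
    for url in urls:
        status = fetch(url)
        if status != 200:
            stale[url] = status
    return stale
```

The `fetch` parameter is there so you can plug in a cached lookup or a test double instead of hitting the live site on every run.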

Update Your Robots.txt Accordingly

Your robots.txt file should not block your sitemap’s URL. Declare the sitemap with a line like Sitemap: https://yourdomain.com/sitemap.xml. The directive is independent of any User-agent group, so it can sit anywhere in the file; the top or bottom is simply easiest to find later. I once unintentionally disallowed the entire sitemap directory, which I fixed after reviewing my robots.txt rules. Keeping the two aligned helps search engines discover your sitemap effortlessly.
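To see whether a robots.txt both declares the sitemap and leaves it fetchable, Python's standard `urllib.robotparser` module can do the parsing. The sketch below assumes Python 3.8 or later (for `site_maps()`); `check_robots` and its return keys are names invented for this example.

```python
from urllib.robotparser import RobotFileParser


def check_robots(robots_txt: str, sitemap_url: str, user_agent: str = "*") -> dict:
    """Report whether robots.txt declares the sitemap and allows fetching it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    declared = parser.site_maps() or []  # site_maps() returns None if there are none
    return {
        "declared": sitemap_url in declared,
        "fetchable": parser.can_fetch(user_agent, sitemap_url),
    }


# Example: the sitemap is declared, but its directory is disallowed.
robots = (
    "User-agent: *\n"
    "Disallow: /sitemaps/\n"
    "Sitemap: https://example.com/sitemaps/sitemap.xml\n"
)
report = check_robots(robots, "https://example.com/sitemaps/sitemap.xml")
```

Here `report["declared"]` is true while `report["fetchable"]` is false, which is the same kind of conflict as the disallowed sitemap directory described above.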

Leverage Log File Analysis

Analyze server logs to see whether crawlers are fetching your sitemap and crawling your pages. Tools like Screaming Frog Log File Analyser can reveal whether bots are running into errors. I discovered that Googlebot was receiving 403 errors for my sitemap, which led me to adjust server permissions. Regular log review uncovers crawling issues before they affect indexing.
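If you do not have a log analysis tool at hand, a few lines of Python can answer the narrow question "what status codes did a given bot get for my sitemap?". This sketch assumes access logs in the common "combined" format used by Apache and nginx; the function name, the default `/sitemap.xml` path, and matching on the `Googlebot` substring are assumptions for illustration. User-Agent strings can be spoofed, so treat the counts as indicative only.

```python
import re
from collections import Counter

# Matches the request, status, size, referer, and user-agent fields of a
# combined-format access log line.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def sitemap_fetch_statuses(lines, sitemap_path="/sitemap.xml", bot_token="Googlebot"):
    """Count HTTP statuses returned to a bot for requests to the sitemap path."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue  # skip lines in a different format
        if match["path"] != sitemap_path or bot_token not in match["agent"]:
            continue
        counts[int(match["status"])] += 1
    return counts
```

Pass it an open file object or any iterable of log lines. A result dominated by 403 or 404 would point to the kind of permissions problem described above.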

Test with Search Console and Other Tools

Submit your sitemap through Google Search Console and Bing Webmaster Tools, and use their reports to identify errors or warnings. If issues arise, address them promptly. I once ignored a warning about URL parameters causing duplicate content; after I finally corrected it, indexing went much more smoothly.

Implement Continuous Monitoring

Regularly check your sitemap and crawl data to catch issues early. Schedule monthly audits or use monitoring tools like Semrush or Ahrefs. During one audit, I noticed crawl errors increasing, which prompted immediate fixes. A proactive approach keeps your page discovery rate high and your SEO efforts effective.
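One simple way to spot a rising trend like that is to keep the error count from each audit and flag any audit where it rose. The sketch below assumes you record the counts yourself as (label, count) pairs in chronological order; `rising_error_audits` and the `threshold` parameter are illustrative, not part of any of the tools named above.

```python
def rising_error_audits(error_counts, threshold=0):
    """Return labels of audits whose error count rose by more than `threshold`
    compared with the previous audit.

    `error_counts` is a list of (label, count) pairs in chronological order.
    """
    flagged = []
    for (_, previous), (label, current) in zip(error_counts, error_counts[1:]):
        if current - previous > threshold:
            flagged.append(label)
    return flagged


# Example with made-up monthly numbers.
history = [("2026-01", 4), ("2026-02", 4), ("2026-03", 9), ("2026-04", 7)]
flagged = rising_error_audits(history)
```

With these sample numbers only the March audit is flagged. Raising `threshold` suppresses small month-to-month wobble.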

Many digital marketers and website owners operate under common assumptions about web strategy, and those assumptions often hide pitfalls that undermine results. Contrary to widespread belief, *more complex or flashy designs don’t always boost user engagement*; sometimes simplicity and clarity outperform intricate aesthetics, especially when they match current trends such as the minimalism that featured in 2025 web design innovations. A more advanced mistake is neglecting *brand consistency across all channels*, which experts argue is crucial for building trust and recognition. Skimping on cohesive branding sends mixed messages that disorient users and lower conversion rates.

Another myth is that PPC campaigns should focus solely on high click-through rates. In reality, *quality of traffic and subsequent conversions matter more*, a point that comes up repeatedly in work on PPC ROI optimization. Many fall into the trap of over-optimizing for clicks without considering user intent or the post-click experience, which diminishes overall ROI.

From a technical SEO perspective, many overlook *crawl budget optimization*. They assume that simply submitting a sitemap is enough. However, advanced SEO also involves managing indexation and crawl prioritization so that crawlers don’t waste requests on low-value URLs, a practice covered in technical SEO guides.

Curious about how these nuances impact your strategy? It’s essential to realize that *minor technical oversights or branding inconsistencies* can sabotage even well-planned campaigns. Recognizing and fixing these hidden flaws can significantly elevate your results, especially in a competitive landscape. Keep in mind, these are often the difference between average and top-tier digital presence.

Why do seemingly minor details matter in advanced web strategies?

Because they shape user trust, search engine rankings, and overall ROI. A single overlooked technical error might make your site invisible to AI-powered search engines, while inconsistent branding can erode user loyalty. The key is to continually audit and refine these aspects, staying ahead in the evolving digital game. If you haven’t examined your website’s technical health lately, now’s a good time to start.

Have you ever fallen into this trap? Let me know in the comments.

Maintaining a high-performing website requires more than just initial setup; it’s an ongoing process that demands the right tools and habits to ensure longevity and adaptability. One of my go-to solutions is Screaming Frog SEO Spider. I use it weekly to crawl my site, identifying broken links, duplicate content, and crawl budget inefficiencies. Its ability to simulate search engine crawlers provides insights that help me fine-tune my technical SEO, ensuring my pages remain accessible and optimized over time.

For content management and updates, I rely heavily on Semrush. Not only does it track keyword rankings, but it also monitors backlinks and content performance. I personally appreciate its technical SEO tools that help me identify and fix indexing issues before they escalate. Regular audits with Semrush make sure my site stays in line with evolving search engine algorithms.

When it comes to automation, I trust Zapier. Setting up workflows to automatically update social media, backup data, or trigger alerts on site errors saves countless hours. It’s perfect for maintaining consistent branding across channels, which, as discussed in branding guides, is crucial for building trust.

Data analysis is essential, and I utilize Google Analytics along with Hotjar for user behavior insights. Hotjar’s heatmaps allow me to see where visitors click and scroll, helping me identify UI issues or bottlenecks that hinder conversions. Continuous monitoring with these tools enables rapid response to changing user preferences.

Looking ahead, the trend points toward embracing emerging technologies like AI-driven analytics and automated content optimization. Staying ahead of these shifts means investing in versatile tools and refining workflows to support scalability.

How do I maintain my site’s health over time? It starts with disciplined routine audits, leveraging automation where possible, and choosing tools tailored to specific challenges. For example, employing Sitebulb for visual crawl analysis can uncover complex issues that crawl data alone might miss. Testing and refining your toolset, while staying informed through resources like technical SEO best practices, will keep your site resilient and competitive.

Don’t forget: committing to regular tool audits and updates is key. I recommend trying out a comprehensive crawl with Screaming Frog this week to identify hidden issues lurking in your site architecture. Consistent maintenance doesn’t just preserve your rankings—it enhances user experience and drives long-term growth.

Ready to elevate your maintenance routine? Explore how automation can streamline your updates by visiting our contact page for personalized strategies.

Three Hard-Won Lessons That Changed My Approach to Web Visibility

First, I realized that trusting automated tools without manual validation is a recipe for disaster—checking each sitemap and crawl report became my routine. Second, I learned that technical SEO is a living process—regular audits and staying updated on emerging trends matter more than ever. Lastly, I discovered that branding consistency across all channels isn’t optional; it’s the foundation for building trust with AI and human audiences alike. Embracing these lessons transformed my strategy from reactive to proactive, ensuring my site stays visible amidst evolving AI algorithms.

Tools and Resources That Keep Me Ahead of the Curve

My go-to resource is Mastering Technical SEO, which is comprehensive and continually updated and helps me understand the nuances of crawlability and indexation. I also rely on Technical SEO Strategies for actionable tips, paired with the latest web design trends to keep my visual branding aligned with current expectations. These resources help me detect issues early and adapt swiftly, both essential in the fast-paced world of 2026 search algorithms.

Your Turn to Elevate Your Search Game

Remember, the key to staying visible in 2026 isn’t just about having a perfect site—it’s about continuous vigilance, adaptation, and applying insights from trusted resources. As search technology advances rapidly, so should your strategies. Now is the time to audit your sitemap, fine-tune your technical SEO, and ensure your branding resonates with both humans and AI. Are you ready to take your site’s search visibility to the next level? If so, I’d love to hear which aspect you’re tackling first—drop your thoughts below!